The Coming Transformation

Prediction: By 2040, 50-60% of jobs will be automated.

Reality check: AGI doesn’t eliminate humans; it concentrates human value.

The question isn’t whether you’ll have a job in 2028. It’s whether your job will be one that AGI amplifies or replaces.

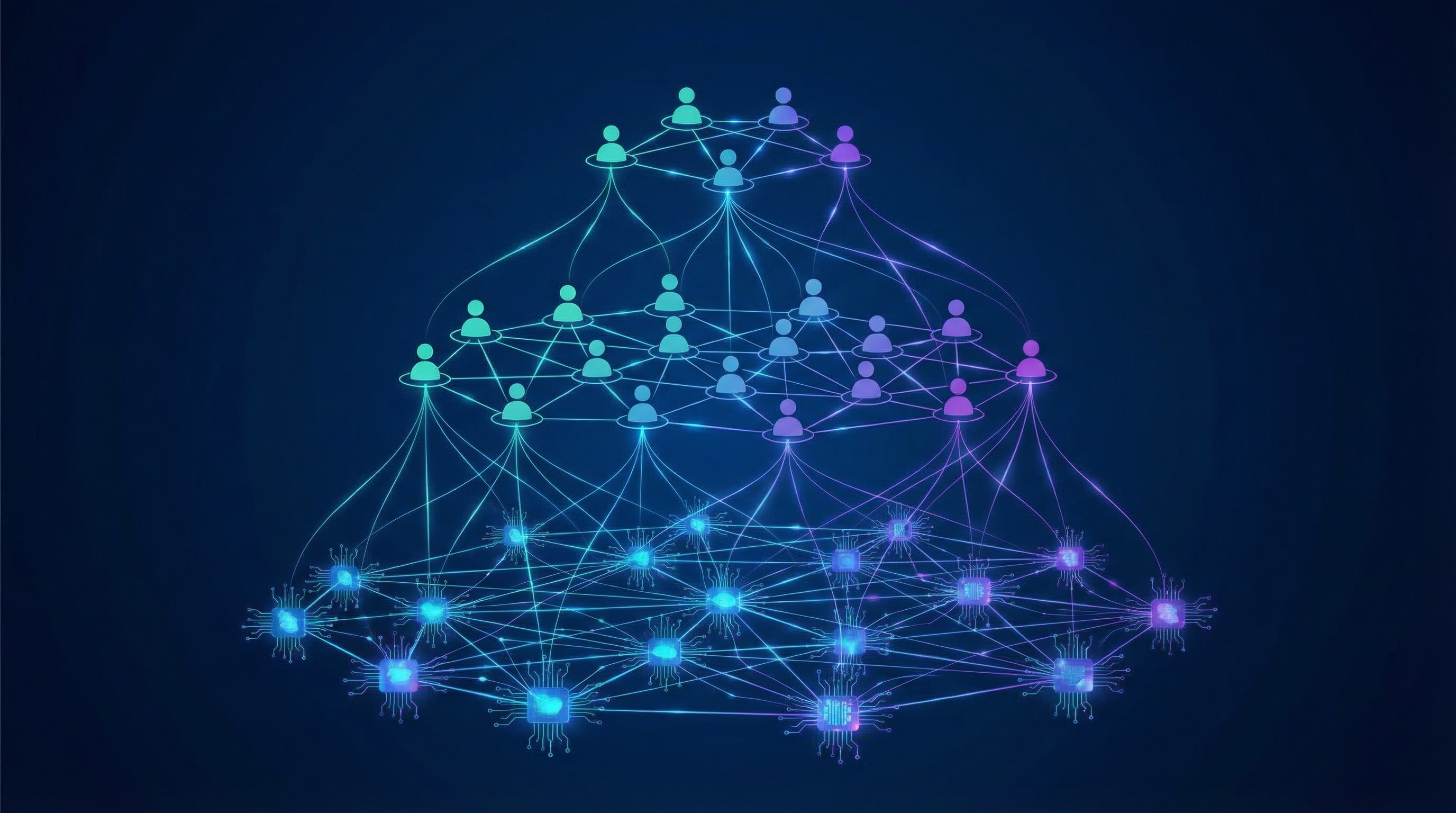

Part 1: The AGI-Powered Company Structure

Before vs. After

| Aspect | Traditional Company (2025) | AGI-Powered Company (2028+) |
|---|---|---|
| Size | 100-10,000 employees | 10-100 humans + 100s of AI agents |
| Layers | 5-10 management levels | 2-3 levels |
| Decision speed | Weeks-months | Real-time |
| Cost structure | Human-labor heavy | Compute-heavy |
| Competitive advantage | Scale, capital | Speed, adaptation |

The One-Person Organization

What becomes possible:

1 CEO + 10 AI Agents + Cloud Infrastructure = $10M Revenue Company

Not purely hypothetical:

- Solo founders already building $1M+ revenue businesses with AI

- By 2028, the ceiling rises to $10M+

- By 2030, $100M+ solo companies become possible

But: This doesn’t simply mean companies get smaller. It means they get more ambitious with fewer people.

Part 2: The Humans Who Still Matter

Category 1: Strategic Leadership (Amplified, Not Replaced)

CEO/Founders:

| Task | Who Does It | Why |
|---|---|---|
| Vision & values | Human | AGI can’t define purpose |
| Strategic direction | Human + AGI | Human sets goals, AGI optimizes |
| Stakeholder relationships | Human | Trust is human-to-human |
| Crisis decisions | Human | Accountability requires humans |

The CEO-AGI partnership:

CEO: "What are our options for entering market X?"

AGI: "Here are 47 scenarios with probability distributions..."

CEO: "What about the risk to our culture?"

AGI: "That's not in my training data. That's your call."
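The task split in the table and the hand-off in the dialogue can be sketched as a small routing rule. This is a minimal illustration, not a real system: the `Owner` enum, the task identifiers, and the `route` and `agi_may_draft` helpers are all hypothetical names, and the rule that unknown tasks default to the human is an assumption drawn from the accountability argument.

```python
from enum import Enum


class Owner(Enum):
    HUMAN = "human"
    HUMAN_PLUS_AGI = "human + AGI"


# Ownership copied from the task table above. The keys are illustrative
# identifiers, not names from any real product.
TASK_OWNERS = {
    "vision_and_values": Owner.HUMAN,
    "strategic_direction": Owner.HUMAN_PLUS_AGI,
    "stakeholder_relationships": Owner.HUMAN,
    "crisis_decisions": Owner.HUMAN,
}


def route(task: str) -> Owner:
    """Return who owns a task; unlisted tasks fall back to the human."""
    # Assumption: anything not explicitly shared stays with the human,
    # mirroring the "that's your call" hand-off in the dialogue.
    return TASK_OWNERS.get(task, Owner.HUMAN)


def agi_may_draft(task: str) -> bool:
    """The AGI proposes options only where the task is shared."""
    return route(task) is Owner.HUMAN_PLUS_AGI


if __name__ == "__main__":
    for task in ("strategic_direction", "crisis_decisions", "culture_risk"):
        print(f"{task}: owner={route(task).value}, "
              f"agi_drafts={agi_may_draft(task)}")
```

In this sketch the culture question from the dialogue is not in the table, so it routes to the human by default.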

Why CEOs survive:

- Accountability can’t be delegated to AI

- Stakeholders (investors, customers, employees) want human commitment

- “The buck stops here” requires a human

C-Suite Evolution:

| Role | 2025 | 2028+ |
|---|---|---|
| CFO | Financial modeling, reporting | Strategy + AGI oversight |
| CTO | Technical roadmap | AI integration + risk management |
| CMO | Campaign management | Brand vision + AI coordination |
| CHRO | Hiring, policy | Culture + human development |

The shift: From doing tasks to directing AI agents that do tasks.

Category 2: Creative & Strategic Roles (AGI-Augmented)

AI Strategists / Prompt Engineers:

What they do:

- Translate business goals into AGI instructions

- Optimize AGI outputs for specific contexts

- Design human-AGI workflows

- Maintain AGI alignment with company values

Why they matter:

- AGI is powerful but not self-directing

- The gap between mediocre and excellent AI use is human judgment

- Competitive advantage comes from better AI utilization

Content Creators & Designers:

The evolution:

Before (2025): Creator writes drafts, edits, publishes

After (2028): Creator directs AGI, curates, adds human perspective

What remains human:

- Taste and judgment

- Brand voice

- Emotional resonance

- Cultural context

R&D Researchers & Innovators:

The AGI advantage:

- AGI processes literature in hours (humans: months)

- AGI runs thousands of experiments in parallel

- AGI identifies patterns humans miss

The human role:

- Frame the right questions

- Judge which findings matter

- Navigate ethical implications

- Build on intuition that AGI can’t replicate

Category 3: Ethics, Oversight & Human-Centered Roles (New Demand)

AI Ethics Specialists / Auditors:

Why this role emerges:

- AGI systems can produce harmful outputs

- Regulators increasingly require AI oversight

- Public trust depends on demonstrated responsibility

- AGI alignment is an ongoing process, not a one-time fix

What they do:

- Audit AGI decisions for bias

- Ensure compliance with regulations

- Review AGI behavior in edge cases

- Translate ethical principles into AGI constraints
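As one concrete reading of "audit AGI decisions for bias", the sketch below compares approval rates across groups in a decision log and flags the batch for human review when the gap is large. The gap between the highest and lowest group rate is commonly called the demographic parity difference. The function names, the `0.2` threshold, and the sample log are all assumptions made for illustration; a real audit would use more than one metric.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Compute the approval rate per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


def needs_human_review(decisions, threshold=0.2):
    """Flag a batch when the gap exceeds the (arbitrary) threshold."""
    return parity_gap(decisions) > threshold


# Made-up sample log: group A approved 3 of 4, group B approved 1 of 4.
sample = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

if __name__ == "__main__":
    print(approval_rates(sample))
    print(needs_human_review(sample))
```

The check only surfaces a disparity. Deciding whether the disparity is justified stays with the human auditor.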

Data & Cybersecurity Specialists:

The new reality:

- AGI systems are high-value targets

- AGI can be weaponized by adversaries

- Securing AGI infrastructure is critical

- AI supply chains need active management

Human Relationship Managers:

What AGI can’t do:

- Build genuine trust

- Navigate complex emotions

- Handle high-stakes negotiations

- Provide human connection

Where humans remain essential:

- Key account relationships

- Complex sales

- Team building and culture

- Crisis management

Category 4: Roles That Disappear or Shrink

Basic Developers / Programmers:

The prediction: AGI writes 95% of code by 2028

What remains:

- Architecture decisions

- Code review and approval

- Novel algorithm design

- Security-critical systems

Administrative & Clerical Roles:

What gets automated:

- Scheduling

- Data entry

- Document processing

- Basic correspondence

What remains:

- Complex coordination

- High-stakes communication

- Discretion and judgment

Junior Analysts:

The AGI advantage:

- Faster data processing

- More comprehensive analysis

- No fatigue, and fewer errors in routine tasks

What happens:

- Junior roles shrink dramatically

- Entry-level roles start to demand mid-level skills

- On-the-job learning becomes harder

Part 3: The New Company Structure

Layer 1: Strategic Humans (5-10% of workforce)

Roles:

- CEO/Founder

- C-Suite

- Senior strategists

- Key relationship holders

Responsibility:

- Set direction

- Make high-stakes decisions

- Maintain accountability

- Define values and culture

Layer 2: Tactical Humans (15-20% of workforce)

Roles:

- AI strategists

- Domain experts

- Creative directors

- Ethics specialists

Responsibility:

- Direct AGI agents

- Ensure quality and alignment

- Bridge human and AI systems

- Maintain competitive advantage

Layer 3: AGI Agents (75-80% of “workers”)

Types:

- Research agents

- Analysis agents

- Creative agents

- Operational agents

- Communication agents

The critical insight: AGI agents don’t replace Layer 1 and 2 humans—they amplify them.
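To make the three layers concrete, the sketch below turns the percentage ranges above into headcount ranges for a given number of "workers" (humans plus agents). The function names and the example size of 200 are assumptions chosen for illustration.

```python
# Layer shares as (low, high) percentages, taken from the three layers above.
LAYER_SHARES = {
    "strategic_humans": (5, 10),
    "tactical_humans": (15, 20),
    "agi_agents": (75, 80),
}


def layer_headcounts(total_workers: int) -> dict:
    """Return the (low, high) headcount range for each layer."""
    return {
        layer: (round(total_workers * low / 100),
                round(total_workers * high / 100))
        for layer, (low, high) in LAYER_SHARES.items()
    }


def human_range(total_workers: int) -> tuple:
    """Combined (low, high) range for the two human layers."""
    counts = layer_headcounts(total_workers)
    low = counts["strategic_humans"][0] + counts["tactical_humans"][0]
    high = counts["strategic_humans"][1] + counts["tactical_humans"][1]
    return low, high


if __name__ == "__main__":
    # Hypothetical organization of 200 workers in total.
    print(layer_headcounts(200))
    print(human_range(200))
```

For 200 workers this gives 40 to 60 humans directing 150 to 160 agents. The low ends of the three ranges sum to 95% and the high ends to 110%, so the shares are rough bands and cannot all sit at the same end at once.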

Part 4: The Adaptation Framework

For Individuals

Skills that gain value:

| Category | Examples |
|---|---|
| Strategic | Vision, prioritization, judgment |
| Creative | Original thinking, taste, curation |
| Interpersonal | Trust-building, negotiation, empathy |
| Ethical | Values, principles, responsibility |
| Technical (new) | AI direction, prompt engineering, oversight |

Skills that lose value:

| Category | Examples |
|---|---|
| Routine | Data processing, templated work |
| Information | Retrieval, summarization |
| Analysis (basic) | Standard patterns, routine insights |
| Technical (old) | Manual coding, system administration |
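One way to apply the two tables to a specific role is to estimate how much of its time falls into categories that lose value. The sketch below is a rough heuristic under stated assumptions: the category identifiers are shorthand for the table rows, and the `exposure` function and the sample role profile are invented for illustration.

```python
# Category identifiers are shorthand for the rows of the two tables above.
GAINING = {"strategic", "creative", "interpersonal", "ethical", "ai_direction"}
LOSING = {"routine", "information", "basic_analysis", "manual_technical"}


def exposure(task_hours: dict) -> float:
    """Share of a role's classified hours in categories that lose value.

    `task_hours` maps a category name to weekly hours. Categories that
    appear in neither set are ignored.
    """
    losing = sum(h for c, h in task_hours.items() if c in LOSING)
    gaining = sum(h for c, h in task_hours.items() if c in GAINING)
    classified = losing + gaining
    if classified == 0:
        raise ValueError("no classified hours in task_hours")
    return losing / classified


# Hypothetical weekly profile, invented for illustration.
junior_analyst = {"routine": 20, "basic_analysis": 12, "interpersonal": 8}

if __name__ == "__main__":
    print(f"exposure: {exposure(junior_analyst):.0%}")
```

A high share suggests a role worth redesigning around the gaining categories. The number is a conversation starter, not a forecast.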

For Companies

Immediate actions (2026):

- Map which roles AGI can amplify

- Identify strategic human roles

- Begin AI integration experiments

- Develop human-AI workflows

Medium-term actions (2027-2028):

- Restructure for human-AGI hybrid

- Invest in AI direction skills

- Build ethics and oversight capabilities

- Create new roles for AGI coordination

Long-term actions (2028+):

- Scale with AGI, not humans

- Maintain human accountability

- Focus on judgment and relationships

- Stay adaptable to AGI evolution

For Society

The challenge:

- Fewer workers needed for same output

- Wealth concentration risk

- Transition pain for displaced workers

- Purpose crisis for those without roles

The opportunity:

- Productivity explosion

- New types of value creation

- More meaningful work (less drudgery)

- Faster problem-solving

The Takeaway

AGI doesn’t eliminate human value—it concentrates it.

The humans who thrive after 2028 will be those who:

- Direct AGI rather than compete with it

- Provide judgment that AGI can’t replicate

- Build relationships that require human trust

- Take responsibility that can’t be delegated

- Create meaning in an AGI-powered world

The company of 2028: Smaller, faster, more ambitious—and still fundamentally human at its core.

Related Posts

- The Next 3 Years: AI Agents Take Over — Part 1

- When AI Joins Democracy — Part 2

- AI Agent Registration — Part 3

This is Part 4 of the AI Future Series. The series explores the next 3 years of AI development and its impact on society, governance, and work.